Go ↔ Java: Complete Guide to Runtime, Memory, and Allocator - Part 3

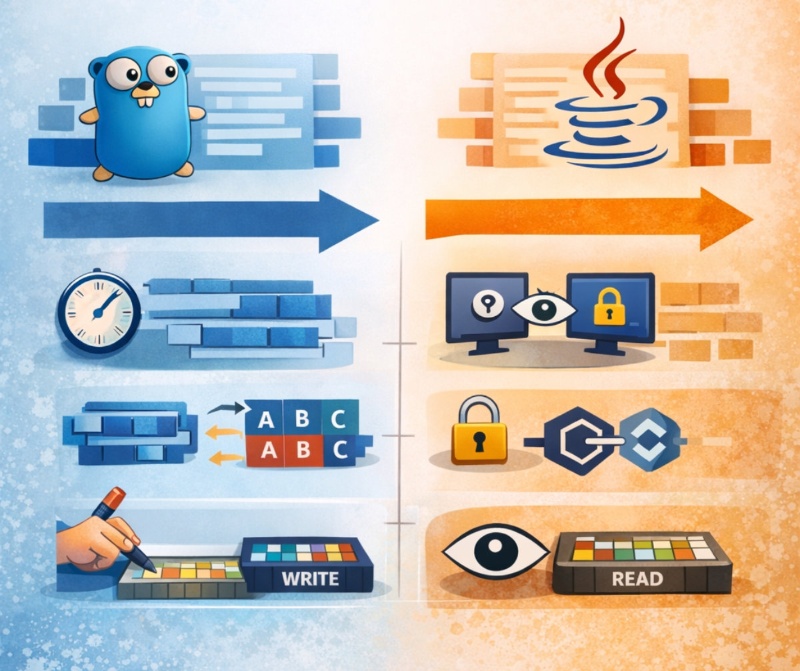

This article is a comprehensive guide to how memory and the runtime work in Go and Java. We will discuss fundamental concepts: the execution scheduler, memory barriers, memory alignment, stack growth, fragmentation, allocation hotspots, and the internal architecture of the Go allocator.

The main goal is to show how the same problems are solved in two languages, Go and Java. This is particularly useful if you are a Java developer learning Go, or a Go developer delving into the JVM.

Runtime Scheduler

Runtime Scheduler - Orchestra of goroutines 🎻⚙️: manages the execution of goroutines and distributes CPU time among them.

The scheduler is a runtime component responsible for distributing tasks among execution threads. Classic Java (platform) threads follow the model "one Java thread = one OS thread". The JVM delegates their scheduling to the operating system, so behavior depends on the OS. Java 21 added virtual threads, which the JVM schedules M:N onto a small pool of carrier threads. Go uses an M:N scheduler: many goroutines (G) are multiplexed onto a limited number of OS threads (M) through logical processors (P).

Under the hood, the Go runtime manages three entities:

- G (goroutine): a lightweight task.
- M: an OS thread.
- P: a logical processor. The number of Ps is set by GOMAXPROCS, which defaults to the number of available CPUs.

The scheduler decides which goroutine to run next. Switching between goroutines happens in user space, so it is cheap. Switching between Java platform threads is more expensive because the OS kernel performs it.

```go
// Example: starting several goroutines
package main

import (
	"fmt"
	"sync"
	"time"
)

func worker(id int, wg *sync.WaitGroup) {
	defer wg.Done() // signal completion when the worker returns
	fmt.Println("Worker", id, "started")
	time.Sleep(time.Second)
	fmt.Println("Worker", id, "finished")
}

func main() {
	var wg sync.WaitGroup
	for i := 0; i < 3; i++ {
		wg.Add(1)
		go worker(i, &wg) // starting goroutine
	}
	wg.Wait() // wait for all workers instead of guessing a duration with time.Sleep
}
```

```java
// Example: starting threads in Java
public class Main {
    public static void main(String[] args) {
        for (int i = 0; i < 3; i++) {
            int id = i;
            new Thread(() -> {
                System.out.println("Worker " + id + " started");
                try {
                    Thread.sleep(1000);
                } catch (InterruptedException e) {
                    Thread.currentThread().interrupt(); // preserve the interrupt status
                }
                System.out.println("Worker " + id + " finished");
            }).start();
        }
        // the JVM exits only after all non-daemon threads have finished
    }
}
```

In Go, think in terms of goroutines rather than threads, because the scheduler itself distributes tasks and optimizes switching. In Java, on the contrary, you need to bound the number of threads with a thread pool (ExecutorService). Otherwise, you can easily create too many threads and overload the system. The reason is cost: creating a platform thread in Java is an expensive OS operation, while a goroutine is a runtime structure whose stack starts at just a few kilobytes.
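The following minimal Go sketch makes the cost difference concrete. It starts 100,000 goroutines and waits for all of them. The function name `spawn` is illustrative, and the sketch assumes Go 1.19+ for `atomic.Int64`. Starting the same number of Java platform threads would typically run into OS limits or memory pressure.

```go
package main

import (
	"fmt"
	"sync"
	"sync/atomic"
)

// spawn starts n goroutines that each record one unit of work,
// waits for all of them, and returns how many finished.
func spawn(n int) int64 {
	var wg sync.WaitGroup
	var finished atomic.Int64 // atomic.Int64 requires Go 1.19+
	for i := 0; i < n; i++ {
		wg.Add(1)
		go func() {
			defer wg.Done()
			finished.Add(1)
		}()
	}
	wg.Wait()
	return finished.Load()
}

func main() {
	fmt.Println("finished:", spawn(100_000)) // finished: 100000
}
```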

The Go scheduler is well suited to high-load systems such as web servers, streaming, and network request processing. For example, an HTTP server in Go can handle tens of thousands of concurrent connections with one goroutine per connection. In Java, the equivalent has traditionally been achieved with NIO and async frameworks (Netty, Spring WebFlux).

The advantages of Go are simplicity and scalability; the downside is less control. Java offers more control over threads but requires more manual configuration. Under the hood, the Go runtime balances the load itself (idle Ps steal work from busy ones), while in Java the developer often has to tune thread pools.

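```
// Go scheduler diagram

G (goroutine) ---\
                  +--> P (processor) --> M (OS thread) --> CPU
G (goroutine) ---/
```

Description:

- G: goroutines (the tasks)

- P: logical processor holding a local run queue of goroutines (there are GOMAXPROCS of them)

- M: the actual OS thread; it must hold a P to run Go code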
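In Go you rarely need a thread pool. When you do want to bound concurrency, which is the role ExecutorService plays in Java, a common pattern is a fixed set of worker goroutines reading from a channel. The following sketch sizes the pool with `runtime.GOMAXPROCS(0)`, giving one worker per P. Here, `sumSquares` is a hypothetical job used only for illustration.

```go
package main

import (
	"fmt"
	"runtime"
	"sync"
)

// sumSquares distributes jobs over a fixed pool of workers, one per P.
func sumSquares(jobs []int) int {
	workers := runtime.GOMAXPROCS(0) // 0 queries the current value without changing it
	in := make(chan int)
	out := make(chan int, len(jobs)) // buffered so workers never block on results

	var wg sync.WaitGroup
	for w := 0; w < workers; w++ {
		wg.Add(1)
		go func() {
			defer wg.Done()
			for n := range in { // each worker pulls jobs until the channel is closed
				out <- n * n
			}
		}()
	}

	for _, n := range jobs {
		in <- n
	}
	close(in)
	wg.Wait()
	close(out)

	total := 0
	for sq := range out {
		total += sq
	}
	return total
}

func main() {
	fmt.Println("Ps:", runtime.GOMAXPROCS(0), "CPUs:", runtime.NumCPU())
	fmt.Println(sumSquares([]int{1, 2, 3, 4})) // 30
}
```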

Memory Barriers

Memory Barriers - Memory Order Guardians 🚧🧠: synchronize read and write operations and prohibit dangerous instruction reordering.

Memory barriers (or memory fences) are mechanisms that constrain how reads and writes may be reordered, so that other threads observe them in a well-defined order. In Java, this is part of the Java Memory Model (JMM), where volatile, synchronized, and the happens-before relation play a key role.

In Go, the memory model is simpler: synchronization is achieved through channels, mutexes, and atomic operations. Since Go 1.19, the memory model specifies that sync/atomic operations are sequentially consistent. Under the hood, both Java and Go rely on CPU instructions to prevent harmful reordering, for example mfence or LOCK-prefixed instructions on x86 and dmb on ARM.

```go
package main

import (
	"fmt"
	"sync/atomic"
)

var counter int64

func main() {
	atomic.AddInt64(&counter, 1)            // atomic read-modify-write
	fmt.Println(atomic.LoadInt64(&counter)) // atomic read: do not mix atomic and plain access
}
```

```java
import java.util.concurrent.atomic.AtomicLong;

public class Main {
    static AtomicLong counter = new AtomicLong();

    public static void main(String[] args) {
        counter.incrementAndGet(); // atomic operation
        System.out.println(counter.get());
    }
}
```

Do not rely on the "intuitive" order of execution in multithreaded code, because compilers and CPUs may reorder instructions. In Go, use channels, mutexes, or sync/atomic; in Java, use volatile, synchronized, or the atomic classes. Without a memory barrier, another thread may see a stale value of a variable. Under the hood, this is caused by CPU caches, store buffers, and compiler optimizations.
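The classic case where ordering matters is publishing data through a flag. The following minimal Go sketch assumes Go 1.19+ (for `atomic.Bool` and the revised memory model). The writer stores the data and then sets the flag atomically, while the reader spins on the flag. With a plain `bool` flag this would be a data race, and the reader could observe a stale value. The name `publishAndRead` is illustrative. In Java, declaring the flag `volatile` gives the same guarantee.

```go
package main

import (
	"fmt"
	"runtime"
	"sync/atomic"
)

// publishAndRead writes data in one goroutine and reads it in another.
// The atomic Store/Load pair creates a happens-before edge, so once the
// reader sees ready == true, it is guaranteed to see data == 42.
func publishAndRead() int {
	var data int         // plain variable, written before the flag is set
	var ready atomic.Bool // atomic.Bool requires Go 1.19+

	go func() {
		data = 42
		ready.Store(true) // publish: the write to data happens before this store
	}()

	for !ready.Load() { // wait until the flag is published
		runtime.Gosched() // yield so the writer goroutine can run
	}
	return data
}

func main() {
	fmt.Println(publishAndRead()) // 42
}
```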

Memory barriers are critical in low-level concurrency: caches, counters, lock-free structures. In Java, they are used inside ConcurrentHashMap and ForkJoinPool; in Go, inside the runtime scheduler and the sync package. The advantage of atomic operations is high performance; the disadvantages are complexity and the risk of errors. Under the hood, atomic operations avoid locks but require strict adherence to memory visibility rules.
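The following minimal Go sketch illustrates the lock-free style mentioned above. It uses a compare-and-swap (CAS) loop to track a running maximum without a mutex. The function names are hypothetical, and `atomic.Int64.CompareAndSwap` requires Go 1.19+. The retry loop is exactly where the risk of errors comes from, because every step must tolerate another goroutine changing the value in between. In Java, `AtomicLong.compareAndSet` (or `accumulateAndGet(v, Math::max)`) plays the same role.

```go
package main

import (
	"fmt"
	"sync"
	"sync/atomic"
)

// storeMax raises best to v if v is larger, using a compare-and-swap
// retry loop instead of a mutex.
func storeMax(best *atomic.Int64, v int64) {
	for {
		cur := best.Load()
		if v <= cur {
			return // already at least v
		}
		if best.CompareAndSwap(cur, v) {
			return // our update won
		}
		// another goroutine changed best in between: reload and retry
	}
}

// concurrentMax updates one shared maximum from many goroutines.
// It starts from 0, so it assumes non-negative inputs.
func concurrentMax(values []int64) int64 {
	var best atomic.Int64
	var wg sync.WaitGroup
	for _, v := range values {
		wg.Add(1)
		go func(v int64) {
			defer wg.Done()
			storeMax(&best, v)
		}(v)
	}
	wg.Wait()
	return best.Load()
}

func main() {
	fmt.Println(concurrentMax([]int64{3, 17, 8, 42, 5})) // 42
}
```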

Memory Alignment

Memory Alignment - Memory ruler 📏🧩: aligns data for quick access by placing it on memory boundaries.

Memory alignment is the placement of data in memory at boundaries that are convenient for the CPU. For example, on 64-bit platforms an int64 is aligned on an 8-byte boundary.

In Go, the developer can influence alignment through the order of fields in a struct. In Java, alignment is hidden within the JVM but affects performance (padding, false sharing).

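```go
// Sizes in the comments assume a 64-bit platform (amd64, arm64).
type Bad struct {
	a bool  // 1 byte + 7 bytes of padding so that b starts on an 8-byte boundary
	b int64 // 8 bytes
	c bool  // 1 byte + 7 bytes of trailing padding
} // unsafe.Sizeof(Bad{}) == 24

type Good struct {
	b int64 // 8 bytes
	a bool  // 1 byte
	c bool  // 1 byte + 6 bytes of trailing padding
} // unsafe.Sizeof(Good{}) == 16
// (with only the fields a and b, both orders would take 16 bytes)
```

```java
class Bad {
    boolean a;
    long b;
}

class Good {
    long b;
    boolean a;
}
// HotSpot reorders fields itself (wider types first), so both classes
// usually end up with the same layout: declaration order matters far less than in Go.
```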

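In Go, ordering struct fields from the largest to the smallest alignment usually minimizes padding and saves memory. In Java, the JVM already reorders fields. In advanced cases, you can combat false sharing with @Contended (jdk.internal.vm.annotation.Contended, which requires -XX:-RestrictContended for application code). Under the hood, the CPU reads memory in chunks called cache lines, typically 64 bytes each. Misaligned data can straddle two cache lines and cause additional memory accesses.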

Alignment is important in high-performance systems: game dev, trading systems, high-load services. In Go, you can manually optimize structs and reduce memory consumption. In Java, this is less obvious, but important when dealing with multithreading (false sharing). The plus — performance increase, the minus — code complexity. Under the hood, alignment optimization reduces cache misses.

Stack Growth / Shrinkage

Stack Growth / Shrinkage - Stack Breathing 🌬️📚: the execution stack expands under load and shrinks again, changing its size dynamically.

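In Java, each thread's stack has a fixed maximum size, typically about 1MB by default on 64-bit platforms and configurable with -Xss. A StackOverflowError is thrown when the stack overflows. In Go, the goroutine stack is dynamic: it starts small (about 2KB) and grows as needed.

Under the hood, when a goroutine's stack runs out, the Go runtime allocates a new stack twice the size, copies the old contents into it, and adjusts pointers into the stack. The garbage collector can later shrink a stack that is mostly unused. This is what makes goroutines so lightweight. In Java, the stack is reserved upfront and does not move.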

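```go
package main

import "fmt"

func recursive(n int) int {
	if n == 0 {
		return 0
	}
	return n + recursive(n-1) // the stack will grow dynamically
}

func main() {
	fmt.Println(recursive(100_000)) // deep, but fine: the goroutine stack grows as needed
}
```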

```java
public class Main {
    static int recursive(int n) {
        if (n == 0) return 0;
        return n + recursive(n - 1); // may cause StackOverflowError
    }
}
```

In Go, recursion can be used more safely, but don't abuse it, because copying the stack also costs CPU time and memory. In Java, avoid deep recursion and prefer iteration. Under the hood, Go can reallocate and move the stack, while Java cannot.

The dynamic stack in Go is useful in systems with many tasks (goroutines), such as parsers and network services. In Java, a fixed stack is simpler and more predictable but less flexible. The advantage of Go is memory savings; the downside is the overhead of copying. Under the hood, small initial stacks are what allow Go to support millions of goroutines.

Memory Fragmentation

Memory Fragmentation - Broken memory 🧩💥: free chunks instead of a whole block, as memory is divided into small non-contiguous pieces.

Memory fragmentation is a situation where free memory is broken into many small pieces, making it impossible to allocate large blocks efficiently. There are two types:

- Internal fragmentation: a block is larger than the object placed in it, for example because the request is rounded up to a size class.
- External fragmentation: free memory is split into non-contiguous areas, each too small to use.

In Go, fragmentation is controlled through size classes and a slab-like allocator. Memory is divided into fixed-size slots, and each small object (up to 32 KB) is rounded up to the nearest size class. This reduces external fragmentation but can increase internal fragmentation.

In Java, HotSpot's collectors are generational (Eden, Survivor, Old Gen) and move objects during collection (compaction), which virtually eliminates external fragmentation. Traditionally, compaction has cost GC pauses. Modern collectors such as ZGC and Shenandoah perform most of it concurrently.
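Internal fragmentation is easy to quantify. The following sketch hard-codes the first Go size classes, an excerpt of the table in runtime/sizeclasses.go, and shows how much of each slot is wasted. The runtime's real lookup uses precomputed index tables rather than a loop. The sketch also ignores the tiny allocator, which packs pointer-free objects smaller than 16 bytes together. The names `smallSizeClasses` and `roundUp` are illustrative.

```go
package main

import "fmt"

// smallSizeClasses is an excerpt of the first Go size classes (in bytes);
// the full table in runtime/sizeclasses.go goes up to 32768.
var smallSizeClasses = []int{8, 16, 24, 32, 48, 64, 80, 96, 112, 128, 144, 160, 176, 192, 208, 224, 240, 256}

// roundUp returns the size class a request of n bytes lands in
// (for 1 <= n <= 256), or -1 if n is outside this excerpt.
func roundUp(n int) int {
	for _, c := range smallSizeClasses {
		if n <= c {
			return c
		}
	}
	return -1
}

func main() {
	for _, n := range []int{1, 17, 33, 100} {
		c := roundUp(n)
		fmt.Printf("request %3d B -> class %3d B, wasted %2d B\n", n, c, c-n)
	}
	// e.g. "request  33 B -> class  48 B, wasted 15 B"
}
```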

Example: frequent allocations of different sizes (Go)

```go
package main

import "fmt"

var sink []byte // package-level sink: makes every buffer a real heap allocation

func main() {
	for i := 0; i < 1000000; i++ {
		sink = make([]byte, i%100) // creating arrays of different sizes
		// each size falls into its size class
		// this can lead to internal fragmentation
	}
	fmt.Println("done")
}
```

Example: analog in Java

```java
public class Main {
    public static void main(String[] args) {
        for (int i = 0; i < 1_000_000; i++) {
            byte[] arr = new byte[i % 100];
            // the JVM places the object in Eden space
            // during GC it may move and "compact" memory
        }
        System.out.println("done");
    }
}
```

In Go, reuse objects (for example, with sync.Pool) if you have frequent allocations. This reduces pressure on the allocator and decreases fragmentation. In Java, minimize the creation of short-lived objects to reduce the load on the GC. Under the hood, Go does not compact memory (unlike the JVM), so fragmentation can accumulate. The JVM, on the other hand, moves objects, which reduces fragmentation but traditionally requires stop-the-world (STW) pauses.
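The following minimal sketch shows buffer reuse with `sync.Pool`. Here, `render` is a hypothetical hot-path function. The pool is a cache, not a guarantee: the runtime may drop pooled objects during any GC, so code must always be ready to receive a fresh object from `New`.

```go
package main

import (
	"bytes"
	"fmt"
	"sync"
)

// bufPool hands out reusable buffers; New is called only when the pool is empty.
var bufPool = sync.Pool{
	New: func() any { return new(bytes.Buffer) },
}

// render formats a greeting using a pooled buffer instead of allocating a new one.
func render(name string) string {
	buf := bufPool.Get().(*bytes.Buffer)
	buf.Reset() // a pooled buffer may still hold data from its previous user
	defer bufPool.Put(buf)

	buf.WriteString("hello, ")
	buf.WriteString(name)
	return buf.String() // String copies the bytes, so the result is safe after Put
}

func main() {
	for _, n := range []string{"go", "java"} {
		fmt.Println(render(n))
	}
}
```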

Fragmentation is especially important in long-running services: backend APIs, streaming, high-load systems. In Go, it can inflate the process RSS beyond what the live data actually needs; in Java, it increases GC time. For example, an image processing service often creates buffers of different sizes, which is a classic source of fragmentation.

The advantages of Go are predictability and the absence of compaction; the downside is possible memory "leakage" through fragmentation. In Java, the advantage is compact memory; the disadvantage is GC pauses. Under the hood, this is the difference between a moving GC (Java) and a non-moving allocator (Go).

Allocation Hotspot

Allocation Hotspot - Hot allocation point 🔥📍: a place in the code where memory is allocated frequently.

An allocation hotspot is a section of code where a large number of memory allocations occur. It can become a bottleneck because every allocation has a cost, even with optimizations, and adds work for the GC.

In Go, allocations are optimized through mcache (per-P local caches), but frequent allocations still add up. In Java, each thread allocates inside its TLAB (Thread-Local Allocation Buffer) without synchronization.

Under the hood, both systems try to keep the common allocation path lock-free. When a local buffer runs out, however, the allocator has to fall back to shared structures.

Example hotspot in Go

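```go
package main

type User struct {
	id int
}

// sink forces the allocations onto the heap; without it, escape analysis
// would keep &User{} on the stack (or remove it) and nothing would be allocated.
var sink *User

func main() {
	for i := 0; i < 1000000; i++ {
		sink = &User{id: i} // one heap allocation per iteration
	}
}
```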

Example hotspot in Java

```java
class User {
    int id;
    User(int id) { this.id = id; }
}

public class Main {
    public static void main(String[] args) {
        for (int i = 0; i < 1_000_000; i++) {
            User u = new User(i); // hotspot allocations
            // since u never escapes, the JIT's escape analysis may eliminate this allocation
        }
    }
}
```

Avoid unnecessary allocations in hot loops. In Go, use value types instead of pointers where possible; in Java, use object pooling or primitives. Even fast allocations add load on the allocator and the GC. Under the hood, frequent allocations mean frequent trips to mcache (Go) or the TLAB (Java). When those run out, the allocator falls back to slower shared structures.
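Go can count allocations directly. The following minimal sketch uses `testing.AllocsPerRun`, which also works outside tests. `User`, the sink variables, and both functions are illustrative. Storing a pointer in a package-level variable forces a heap allocation, so expect about one allocation per call for the pointer version. Storing a value only copies it, so expect none for the value version. Building with `go build -gcflags=-m` prints the corresponding escape-analysis decisions.

```go
package main

import (
	"fmt"
	"testing"
)

type User struct {
	id   int
	name string
}

var (
	ptrSink *User // storing a pointer in a global makes the User escape to the heap
	valSink User  // storing a value copies it; no heap allocation is needed
)

func byPointer() { ptrSink = &User{id: 1, name: "a"} }
func byValue()   { valSink = User{id: 1, name: "a"} }

func main() {
	// AllocsPerRun reports the average number of heap allocations per call.
	fmt.Println("pointer:", testing.AllocsPerRun(1000, byPointer))
	fmt.Println("value:  ", testing.AllocsPerRun(1000, byValue))
}
```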

Hotspots often arise in serialization/deserialization, logging, and request handling. For example, JSON parsing creates many temporary objects. In Go, this increases the load on the GC; in Java, it causes frequent minor GCs. The benefit of such optimizations is lower latency; the cost is more complicated code (reuse, pooling). Under the hood, hotspots drive the allocation rate, a key performance metric.
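One common fix for hotspots in decoding and request handling is to size buffers up front. The following minimal sketch compares an append loop that starts from a nil slice with one that preallocates its capacity; both function names are illustrative. The same idea applies to `new ArrayList<>(n)` in Java.

```go
package main

import (
	"fmt"
	"testing"
)

var sink []int // keeps results reachable so the compiler cannot elide the work

// grow appends n items to a nil slice, reallocating each time capacity runs out.
func grow(n int) []int {
	var s []int
	for i := 0; i < n; i++ {
		s = append(s, i)
	}
	return s
}

// prealloc sizes the backing array once, so the appends never reallocate.
func prealloc(n int) []int {
	s := make([]int, 0, n)
	for i := 0; i < n; i++ {
		s = append(s, i)
	}
	return s
}

func main() {
	fmt.Println("grow:    ", testing.AllocsPerRun(100, func() { sink = grow(10_000) }))
	fmt.Println("prealloc:", testing.AllocsPerRun(100, func() { sink = prealloc(10_000) }))
}
```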

mcache / mcentral / mheap (Go Allocator Architecture)

mcache / mcentral / mheap - Allocator Hierarchy 🏗️🧠: the memory management levels of Go, a multi-level system for distributing memory.

The Go allocator is built as a multi-level system:

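- mcache: local cache for each P (processor)

- mcentral: shared pool of spans for each size class

- mheap: global heap; manages memory obtained from the OS

When a goroutine allocates a small object, the allocator first uses the mcache of its current P, which is fast and needs no locking. If the cached span for that size class is full, the allocator gets a new span from mcentral, and only if mcentral has none does it go to mheap. Objects larger than 32 KB skip mcache and mcentral and are allocated directly from mheap.

The rough Java equivalent: TLAB (a thread-local chunk of Eden) → Eden → Old Gen.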

```
// Go allocator scheme

Goroutine
    |
    v
mcache   (per P, no lock)
    |
    v
mcentral (shared per size class, synchronized)
    |
    v
mheap    (global; requests memory from the OS)
```

Description:

- fast path: mcache

- slow path: mcentral, then mheap
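The routing decision can be summarized in a few lines. The following sketch is a simplified model, not the runtime's code. Its thresholds mirror constants in runtime/malloc.go: 16 bytes for the tiny allocator and 32 KB for small objects. Details such as the allocation headers added in recent Go versions are ignored. The function `path` is hypothetical.

```go
package main

import "fmt"

// Simplified thresholds mirroring constants in the Go runtime (runtime/malloc.go).
// They are implementation details and may change between releases.
const (
	maxTinySize  = 16       // pointer-free objects smaller than this use the tiny allocator
	maxSmallSize = 32 << 10 // 32 KB: anything larger bypasses mcache and mcentral
)

// path returns the allocation route for a request of size bytes:
//   "tiny"  - packed with other tiny objects into a 16-byte block from mcache
//   "small" - a slot of the matching size class from mcache (refilled from mcentral)
//   "large" - a dedicated span of whole pages taken directly from mheap
func path(size int, hasPointers bool) string {
	switch {
	case size > maxSmallSize:
		return "large"
	case !hasPointers && size < maxTinySize:
		return "tiny"
	default:
		return "small"
	}
}

func main() {
	fmt.Println(path(8, false))          // tiny
	fmt.Println(path(1024, true))        // small
	fmt.Println(path(10_000_000, false)) // large
}
```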

Example (Go allocation)

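```go
package main

// Package-level sinks make both objects escape; a local that never escapes
// would be placed on the stack and never reach the allocator at all.
var (
	small *int
	large []byte
)

func main() {
	small = new(int)                 // small pointer-free object: served via mcache
	large = make([]byte, 10_000_000) // > 32 KB: allocated directly from mheap
}
```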

Example (Java equivalent)

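```java
public class Main {
    public static void main(String[] args) {
        Object a = new Object();
        // small object: TLAB in Eden (replaces the deprecated new Integer(10))

        byte[] b = new byte[10_000_000];
        // large object: may be allocated directly in the old generation
        // (with G1, as a "humongous" object)
    }
}
```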

Small and large allocations are handled differently. In Go, avoid frequent large allocations, because they go directly to mheap and are more expensive. In Java, large objects may bypass Eden, increasing pressure on Old Gen. Under the hood, large objects are expensive to move and do not fit into local caches.

The architecture of the allocator matters when designing high-load systems. For example, buffers (byte[] in Java, []byte in Go) are better reused: in Go through sync.Pool, in Java through ByteBuffer pooling. Reuse reduces the load on the GC and the allocator; the cost is the risk of leaks and more complex management. Under the hood, reuse reduces how often the allocator reaches mheap or Old Gen, which is critical for latency-sensitive applications.
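To see the effect of reuse in numbers, you can sample the cumulative `TotalAlloc` counter from `runtime.ReadMemStats` around a piece of code. The following is a minimal sketch. `allocatedBy` and the buffer sizes are illustrative, and `clear` requires Go 1.21+. In real services, the `runtime/metrics` package or pprof allocation profiles are better tools.

```go
package main

import (
	"fmt"
	"runtime"
)

var sink []byte // keeps buffers reachable so the allocations are not optimized away

// allocatedBy returns the heap bytes allocated while f runs, using the
// cumulative TotalAlloc counter. ReadMemStats briefly stops the world,
// so this is a diagnostic, not something for a hot path.
func allocatedBy(f func()) uint64 {
	var before, after runtime.MemStats
	runtime.ReadMemStats(&before)
	f()
	runtime.ReadMemStats(&after)
	return after.TotalAlloc - before.TotalAlloc
}

func main() {
	fresh := allocatedBy(func() {
		for i := 0; i < 10; i++ {
			sink = make([]byte, 1<<20) // a new 1 MB buffer every time
		}
	})

	buf := make([]byte, 1<<20)
	reused := allocatedBy(func() {
		for i := 0; i < 10; i++ {
			clear(buf) // reuse the same buffer (clear requires Go 1.21+)
			sink = buf
		}
	})

	fmt.Printf("fresh: %d KB, reused: %d KB\n", fresh/1024, reused/1024)
}
```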

Stack Overflow Handling

Stack Overflow Handling - Overflow Rescuer 🧯📚: expands the stack under critical load and handles overflow of the execution stack.

Stack overflow occurs when the call stack exceeds its available size. In Java, each thread's stack has a fixed size (typically 512KB–1MB), and when it overflows, a StackOverflowError is thrown. The JVM does not try to "rescue" execution. The error unwinds the thread's stack and, if it is not caught, terminates that thread. It can technically be caught, but recovering from it is rarely safe.

In Go, the approach is fundamentally different. Each goroutine starts with a small stack (~2KB), which dynamically grows as needed. When the stack fills up, the Go runtime automatically allocates a new, larger stack and copies the data there.

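Under the hood, the Go compiler inserts a check into the prologue of most functions that compares the stack pointer with the stack's guard limit. If there is not enough space, runtime.morestack is called. It allocates a stack twice the size and copies the frames into it. Early Go versions used segmented ("split") stacks; since Go 1.3, stacks are contiguous and copied on growth. This makes overflow a rare occurrence.

However, overflow in Go is still possible (for example, with infinite recursion); it just occurs much later. The maximum goroutine stack size is 1 GB on 64-bit platforms (250 MB on 32-bit) and can be adjusted with runtime/debug.SetMaxStack. Exceeding it is a fatal error ("goroutine stack exceeds 1000000000-byte limit") that cannot be caught with recover.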

Example: recursion (Go)

```go
// Demonstration of stack growth in Go
package main

import "fmt"

func recursive(n int) int {
	if n == 0 {
		return 0
	}
	// each call adds a frame to the stack
	return n + recursive(n-1)
}

func main() {
	fmt.Println(recursive(100000))
	// Go will dynamically enlarge the stack
}
```

Example: recursion (Java)

```java
// Demonstration of stack overflow in Java
public class Main {
    // long, not int: the sum 1..100000 would overflow an int
    static long recursive(int n) {
        if (n == 0) return 0;
        // each call increases the stack;
        // at a large depth -> StackOverflowError
        return n + recursive(n - 1);
    }

    public static void main(String[] args) {
        System.out.println(recursive(100000));
        // will most likely crash with an error
    }
}
```

In Go, do not rely on an "infinite stack", because despite dynamic growth, copying the stack costs resources. In Java, avoid deep recursion or raise the limit explicitly (-Xss, or the stackSize argument of the Thread constructor), because the stack is fixed and does not expand. Under the hood, the JVM detects overflow with guard pages at the end of the stack and throws an error when they are touched. Go, by contrast, checks in function prologues and reallocates the stack ahead of time, which is more expensive but more flexible. In both languages, iteration is preferable where it comes naturally.

In Go, the dynamic stack makes it practical to write "clean" recursive algorithms: parsers, tree traversals, DFS. In Java, such algorithms are often rewritten iteratively with an explicit stack on the heap (e.g., ArrayDeque<Integer>, preferred over the legacy Stack class).

- Go: the advantages are simplicity and scalability; the disadvantage is hidden work during stack growth.
- Java: the advantage is predictability; the disadvantage is the depth limit.

Under the hood, Go optimizes stacks for millions of goroutines, while the JVM optimizes for thread stability.

Span (mspan in Go allocator)

Span (mspan) - Memory package of the allocator 📦🧠: a block of contiguous pages that the Go allocator manages as a unit and hands out as objects.

A span (more precisely, an mspan) is a key structure in the Go allocator. It represents a run of contiguous memory pages used to store objects of a single size class.

In Go, memory is organized as follows: mheap → mspan → objects. Each span contains blocks of the same size.

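For example, a span for the 16-byte size class contains many 16-byte slots. Because every slot has the same size, a freed slot can always be reused by the next object of that class. This avoids external fragmentation within the span and makes allocation fast.

In Java, the closest analog is a heap region or space (Eden, Survivor), but the JVM does not use explicit fixed size classes. Instead, it uses bump-pointer allocation: each thread simply advances a pointer through its TLAB.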

```
// Span diagram

mheap
  |
  +-- mspan (size class 16 bytes)
  |     [obj][obj][obj][obj]
  |
  +-- mspan (size class 32 bytes)
        [obj][obj][obj]
```

Description:

- each span = array of objects of the same size

- reduces fragmentation
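The slot-and-bitmap idea behind a span can be modeled in a few lines. The following sketch is deliberately simplified and is not the runtime's implementation. The type `toySpan` is hypothetical. The real mspan uses packed allocation bits, a `freeindex` cursor, and GC mark bits, and freeing is done by the garbage collector rather than by explicit calls.

```go
package main

import "fmt"

// toySpan is a simplified model of an mspan: a fixed number of equal-sized
// slots plus a record of which slots are in use.
type toySpan struct {
	elemSize int
	used     []bool // one entry per slot (the runtime uses packed bits)
}

func newToySpan(spanBytes, elemSize int) *toySpan {
	return &toySpan{elemSize: elemSize, used: make([]bool, spanBytes/elemSize)}
}

// alloc returns the byte offset of a free slot, or -1 if the span is full.
func (s *toySpan) alloc() int {
	for i, u := range s.used {
		if !u {
			s.used[i] = true
			return i * s.elemSize
		}
	}
	return -1
}

// free marks the slot at the given offset as reusable.
func (s *toySpan) free(offset int) {
	s.used[offset/s.elemSize] = false
}

func main() {
	s := newToySpan(8192, 16) // one 8 KB page split into 512 slots of 16 bytes
	a := s.alloc()
	b := s.alloc()
	s.free(a)
	c := s.alloc()                    // the freed slot is reused: no external fragmentation
	fmt.Println(len(s.used), a, b, c) // 512 0 16 0
}
```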

Example: allocations in Go

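```go
package main

type Small struct {
	a int32 // 4 bytes, no pointers
}

var sink *Small // package-level sink: forces each object onto the heap

func main() {
	for i := 0; i < 1000; i++ {
		sink = new(Small)
		// objects this small (pointer-free, < 16 B) are packed by the tiny allocator
		// into 16-byte blocks, which come from spans of a single size class
	}
}
```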

Example: analog in Java

```java
class Small {
    int a;
}

public class Main {
    public static void main(String[] args) {
        for (int i = 0; i < 1000; i++) {
            Small s = new Small();
            // JVM allocates in Eden space
            // objects are not tied to size class directly
        }
    }
}
```

Understanding size classes in Go is key to memory optimization. A structure slightly larger than a class boundary falls into the next class and wastes the difference; for example, a 33-byte object occupies a 48-byte slot. In Java, this is less critical, as the JVM manages placement itself. Under the hood, Go uses spans to avoid external fragmentation, but the price is strict size boundaries.

Spans matter in high-load systems: APIs, message brokers, real-time services. For example, millions of small objects are packed efficiently into spans, which gives Go high allocation speed. In Java, the analog is Eden allocation, which is also very fast. The advantages for Go are control and predictability; the downside is possible internal fragmentation. Under the hood, spans let most allocations avoid costly operations on mheap.

Page (Page Memory)

Page - Page Memory 📄🧩: the minimal block of memory allocation and the basic unit in which memory is distributed.

A page is the basic unit in which the Go heap manages memory. The Go runtime uses 8KB pages regardless of the OS page size, which is often 4KB. The memory itself is reserved from the OS in much larger chunks: arenas of 64 MB on most 64-bit platforms.

mheap manages pages by grouping them into spans; a span consists of one or more contiguous pages.

In Java, the JVM also works with pages of memory, but this is hidden from the developer. The JVM requests memory from the OS in large regions and divides them internally.

Under the hood, both runtimes obtain virtual memory from the OS (mmap on Unix-like systems, VirtualAlloc on Windows), but Go manages its pages more explicitly.

```
// Page scheme

OS Memory
    |
    +-- Page (8KB)
    +-- Page (8KB)
    +-- Page (8KB)
    |
    v
  Span
    |
    v
 Objects
```

Description:

- page = basic block

- span = set of pages

Example: large allocations (Go)

```go
package main

func main() {
	data := make([]byte, 100000)
	// 100,000 bytes > 32 KB: a large object, so mheap allocates a dedicated
	// span of 13 contiguous 8 KB pages
	_ = data
}
```

Example: analog in Java

```java
public class Main {
    public static void main(String[] args) {
        byte[] data = new byte[100000];
        // JVM allocates memory from heap
        // physically these are also OS pages
    }
}
```

Do not abuse large allocations. In Go, they go directly to mheap and require fresh pages; in Java, large objects can land directly in Old Gen. Pages ultimately come from the OS, so when the heap has to grow, a request can trigger system calls (mmap) and page faults.

Pages are critical in systems dealing with large amounts of data: file processing, streaming, ML. In Go, keep buffer sizes under control; in Java, use streaming APIs instead of loading everything into memory. The benefit is efficient memory handling; the cost is more complex management. Under the hood, correct page usage reduces pressure on the GC and the allocator.

| Term | Go | Java | Comment |
|---|---|---|---|
| Runtime Scheduler | M:N (goroutines) | 1:1 (platform threads); M:N for virtual threads (Java 21+) | The Go runtime schedules and switches tasks itself, independently of the OS. This reduces the cost of context switching and lets a system scale to millions of concurrent tasks. In Java, platform threads are scheduled by the OS, which increases overhead but gives control through thread pools and OS scheduling. |
| Memory Barriers | atomic, channels, mutexes | volatile, synchronized, atomic classes | Both platforms rely on CPU memory fences. Java has a strict JMM built on happens-before. Go's model is simpler but requires discipline. Under the hood, this protects against reordering and stale values. |
| Alignment | manual control via field order | hidden by the JVM | In Go, you can order struct fields to reduce padding. In Java, the JVM lays out fields itself; developers can still fight false sharing with @Contended or manual padding. |
| Stack | dynamic (starts at ~2KB) | fixed size (-Xss) | Go grows the stack as needed by copying it; Java reserves the stack upfront. This makes Go more flexible but adds overhead when the stack grows. |
| Fragmentation | possible | minimal | Go does not move objects (non-moving GC), so fragmentation is possible. Java compacts the heap, which reduces fragmentation but can cause pauses. |
| Hotspot | mcache | TLAB | Local allocation buffers reduce lock contention, but when they are exhausted, falling back to shared structures slows execution. |
| Allocator | mcache/mcentral/mheap | TLAB/Eden/Old Gen | Go uses a slab-like allocator with size classes; Java uses a generational heap. Different approaches, one goal: fast allocation. |
| Stack Overflow | rare (1 GB limit on 64-bit) | frequent with deep recursion | In Go, the runtime prevents overflow by growing the stack until the limit is reached. In Java, StackOverflowError normally terminates the thread. |
| Span | groups of same-size objects | closest analog: heap regions | The span is a key element of the Go allocator. Java has no direct analog, but Eden and G1 regions play a similar role. |
| Page | 8KB runtime pages | hidden | Go manages pages explicitly. Java hides this behind the JVM but uses the same OS mechanisms. |

```
// General Go memory scheme

Goroutine
    |
    v
mcache → mcentral → mheap → OS pages

Java analog:

Thread
    |
    v
TLAB → Eden → Old Gen → OS memory
```

Description:

- Go focuses on lightweight concurrency and allocator control

- Java focuses on GC and automatic memory management

Conclusion

The main difference between Go and Java in terms of memory and runtime is philosophy. Go bets on simplicity, predictability, and lightweight concurrency, while Java bets on a powerful but complex memory management system.

The Go runtime takes care of scheduling goroutines, growing stacks, and managing the allocator. The developer has fewer knobs to turn but should understand the constraints. Java, on the other hand, offers powerful garbage collectors, a rigorously specified memory model, and a rich toolkit, but requires a deeper understanding of JVM settings.

Practically, this means:

- Go is better suited for high-concurrency systems (microservices, network apps)

- Java is better for enterprise systems with a large amount of business logic and complex memory requirements

Key tips:

- Minimize allocations (in both languages)

- Understand GC behavior

- Avoid deep recursion in Java

- Optimize structures in Go

Under the hood, both platforms solve the same problems: efficient memory usage, concurrency management, and minimizing latency. But they do it in different ways, and understanding these differences is what makes you a strong engineer, capable of choosing the right tool for the task.
